Over the past dozen years of performing energy audits and assessments, I have learned that three root causes warrant an audit: (1) a problem with the house, (2) a problem with the equipment or appliances, or (3) a problem with the occupants. Diagnosing these problems often requires testing the electrical system and equipment to determine usage and associated costs. In this post, I’ll describe a few methods for determining the cost of electricity usage.

Key terminology

Voltage is the difference in electrical potential energy between two points per unit electric charge. More simply, it is the pressure that pushes electrons through an electrical wire or circuit, much like water pressure in a plumbing system. Almost all U.S. residential electrical service is 120/240 volts. Voltage is often represented by the letter E, for electromotive force.

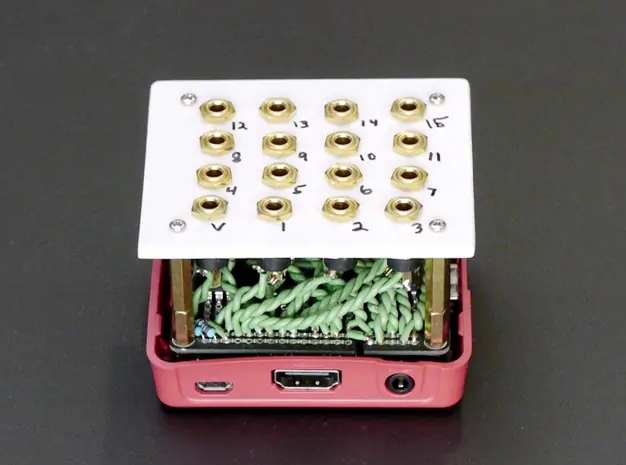

Amperage is the strength of an electrical current, measured in amperes (commonly called amps). In our plumbing analogy, amperage is the water flow in gallons per minute. Breakers, fuses, and wire sizes are all based on the amperage of a circuit; 100- to 200-amp main electrical service panels are common in residential construction, and 15- or 20-amp branch circuits are common for lighting and plug loads. Current is represented by the letter I.

Wattage is the rate of work and is represented by the letter P. It’s a measurement of usage, much like tracking water use with a water meter. Wattage is the basis for how electricity is billed and is the most useful electrical information to an energy auditor. Electricity is billed by the kilowatt-hour (kWh), which is 1,000 watts used for one hour.
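To make the billing arithmetic concrete, here is a minimal Python sketch of how watts turn into kilowatt-hours and then into dollars. It relies on the standard relationship P = E × I, which is only an approximation for AC loads because it ignores power factor. The function names, the 1,500-watt space heater, the four hours of daily use, and the $0.15 per kWh rate are all illustrative assumptions, not figures from an actual audit or utility bill.

```python
def watts_from_volts_amps(volts: float, amps: float) -> float:
    """Approximate power in watts (P = E x I).

    Assumption: a purely resistive load; power factor is ignored.
    """
    return volts * amps


def kilowatt_hours(watts: float, hours: float) -> float:
    """Energy in kWh: 1,000 watts used for one hour equals 1 kWh."""
    return watts * hours / 1000.0


def energy_cost(watts: float, hours: float, rate_per_kwh: float) -> float:
    """Cost in dollars of running a load for a number of hours."""
    return kilowatt_hours(watts, hours) * rate_per_kwh


# Hypothetical example: a space heater drawing 12.5 amps on a 120-volt circuit.
heater_watts = watts_from_volts_amps(120, 12.5)

# Assumed usage: 4 hours a day for a 30-day month.
monthly_kwh = kilowatt_hours(heater_watts, 4 * 30)

# Assumed rate of $0.15 per kWh; use the rate from the actual utility bill.
monthly_cost = energy_cost(heater_watts, 4 * 30, 0.15)

print(f"Power draw: {heater_watts:.0f} W")
print(f"Monthly energy: {monthly_kwh:.0f} kWh")
print(f"Monthly cost: ${monthly_cost:.2f}")
```

With these assumed numbers, the heater draws 1,500 watts, uses 180 kWh over the month, and costs about $27. Substituting a measured wattage and the real rate from a utility bill gives an estimate for any single appliance.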

Resistance is a measure of how difficult it is to pass an electrical current through a conductor. The concept is similar to friction…
