Extrapolating Manual J to Different Conditions

In an attempt to make HVAC equipment comparisons a bit more data-driven, I’m trying to do a rough, first-order “simulation” of equipment performance under actual conditions. I can get hourly weather data (1) and equipment performance specifications under varying conditions (2), and I have a Manual J load calculation. The weather data is simple enough to work with if I only consider outdoor dry bulb temperature, and it’s easy enough to linearly interpolate the equipment performance between the reported datapoints. However, I’m not certain how to extrapolate the Manual J data to other temperatures.
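The interpolation step could look something like this in Python. It is a minimal sketch, assuming the performance data has been copied by hand into a list of (outdoor dry bulb F, COP) pairs. The datapoints are hypothetical placeholders, not values from the linked listing, and the helper name `interpolate` is made up for illustration. Outside the reported range, it holds the nearest endpoint value instead of extrapolating.

```python
from bisect import bisect_left

# Hypothetical (outdoor dry bulb F, COP) pairs; substitute the listed values for your unit.
COP_POINTS = [(5.0, 1.9), (17.0, 2.4), (47.0, 3.6)]

def interpolate(points, x):
    """Linearly interpolate y at x from (x, y) pairs sorted by x.

    Outside the reported range, the nearest endpoint value is held
    constant (clamped) rather than extrapolated.
    """
    xs = [p[0] for p in points]
    if x <= xs[0]:
        return points[0][1]
    if x >= xs[-1]:
        return points[-1][1]
    i = bisect_left(xs, x)  # first index with xs[i] >= x
    (x0, y0), (x1, y1) = points[i - 1], points[i]
    return y0 + (y1 - y0) * (x - x0) / (x1 - x0)

print(interpolate(COP_POINTS, 32.0))
```

At 32F, halfway between the 17F and 47F points, this returns roughly 3.0. Holding the endpoint flat below the lowest rated temperature is a judgment call and is probably optimistic for real equipment. The same function works for a maximum-capacity table.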

As an example, if my Manual J heating load is 20k BTU/h at an indoor setpoint of 70F and an outdoor dry bulb of 10F, the temperature difference is 60F. That gives a naive 333 BTU per degree-hour below 70F (the difference between indoor and outdoor temperatures). However, that doesn’t intuitively seem right. I’m certainly not running the heat on a summer night when it finally drops to 65F, or on a sunny spring or fall day. In short, this method of calculating the load per degree ignores internal heat sources and thermal inertia. In Dana’s equipment sizing article (3), he mentions the concept of a “balance point” temperature, typically between 60F and 65F (lower for better-insulated houses). If I recalculate using a 60F balance point rather than the 70F indoor setpoint, I get 400 BTU per degree-hour below 60F (the difference between the balance point and the outdoor temperature).
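Both approaches amount to a straight line through the design point that reaches zero load at a chosen base temperature. The sketch below assumes exactly that linear model; the helper names are hypothetical. It reproduces the 333 and 400 BTU per degree-hour figures and shows the 65F case: the setpoint-based line still predicts roughly 1,670 BTU/h, while the balance-point line predicts no load.

```python
def slope_btuh_per_f(design_load_btuh, base_temp_f, design_temp_f):
    """BTU/h per degree F for a straight line through the design point
    that falls to zero at base_temp_f."""
    return design_load_btuh / (base_temp_f - design_temp_f)

def heating_load_btuh(slope, base_temp_f, outdoor_temp_f):
    """Linear load model: no heating load at or above the base temperature."""
    return slope * max(0.0, base_temp_f - outdoor_temp_f)

# Example from the post: 20,000 BTU/h at a 10F outdoor design temperature.
naive_slope = slope_btuh_per_f(20_000.0, 70.0, 10.0)    # base = 70F indoor setpoint
balance_slope = slope_btuh_per_f(20_000.0, 60.0, 10.0)  # base = assumed 60F balance point

print(f"{naive_slope:.1f} vs {balance_slope:.1f} BTU/h per F")
print(f"at 65F: {heating_load_btuh(naive_slope, 70.0, 65.0):.0f} vs "
      f"{heating_load_btuh(balance_slope, 60.0, 65.0):.0f} BTU/h")
```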

Both of these methods give the same load at the outdoor design temperature, but the design temperature is, by its nature, atypical. In this example, at a quite common outdoor temperature of 30F, the first method gives a heating demand of about 13,330 BTU/h, while the second gives 12,000 BTU/h. At an outdoor temperature of 40F, the gap is roughly 2,000 BTU/h (about 10,000 vs. 8,000 BTU/h). These differences aren’t huge in an absolute sense, but they do add up. The two methods are 10-20% apart over this range, and once equipment COP at varying outdoor temperatures is taken into account, that can amount to a 500 kWh/year difference.
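A quick way to check these numbers, and to see how the gap behaves at other temperatures, is to tabulate both lines. This sketch assumes the same design point (20,000 BTU/h at 10F) and the 70F and 60F base temperatures from the example. It prints the absolute and percentage difference at a few outdoor temperatures.

```python
# Both lines pass through the Manual J design point; they differ only in where
# they reach zero load (70F setpoint vs. an assumed 60F balance point).
DESIGN_LOAD = 20_000.0  # BTU/h
DESIGN_TEMP = 10.0      # F

def load_at(outdoor_f, base_f):
    """Linear heating load that is DESIGN_LOAD at DESIGN_TEMP and zero at base_f."""
    return DESIGN_LOAD * max(0.0, base_f - outdoor_f) / (base_f - DESIGN_TEMP)

def compare(outdoor_f):
    """Return (setpoint-based load, balance-point load, difference) in BTU/h."""
    naive = load_at(outdoor_f, 70.0)
    balanced = load_at(outdoor_f, 60.0)
    return naive, balanced, naive - balanced

for t in (10.0, 30.0, 40.0, 50.0):
    naive, balanced, diff = compare(t)
    print(f"{t:.0f}F: {naive:,.0f} vs {balanced:,.0f} BTU/h, "
          f"gap {diff:,.0f} ({100.0 * diff / naive:.0f}%)")
```

With the unrounded slope (20,000/60), the first method gives 13,333 BTU/h at 30F. The relative gap is about 10% at 30F and 20% at 40F. It keeps widening as the weather gets milder, reaching about 40% at 50F.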

I suppose the real answer is that there’s no such thing as an accurate rough estimate, and that “it depends” on lots of other factors (wind, sun, internal loads, “thermal mass,” seasonal variation in temperature tolerance, etc.). But does anyone have insight into a good way to extrapolate Manual J loads into a BTU/degree-hour number and temperature baseline(s) that can be used to estimate the load at various conditions?

References:

(1) Historical and average weather data: https://www.ncei.noaa.gov/maps/hourly/

(2) Example equipment performance specs: https://ashp.neep.org/#!/product/32101

(3) Equipment sizing article: https://www.greenbuildingadvisor.com/article/out-with-the-old-in-with-the-new

Note: Why am I doing this? Because simply comparing SEER / HSPF numbers isn’t nerdy enough, and it also doesn’t represent performance under conditions different from the test conditions. As an example, with the numbers I was playing around with last night, the unit with the highest HSPF simulated worse than a unit with a lower HSPF (though the unit with the lowest HSPF did stay in last place after simulation).

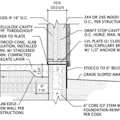

Replies

Bumping this thread because I just got back to this last night. Any thoughts on extrapolating Manual J numbers to heating/cooling loads at other temperatures?

Jonny,

What you are doing is a great exercise; this is how we learn. Heat pumps have added another level of complexity (in a good way) to accurate HVAC sizing calculations. In my reading of many GBA articles, there seems to be a general mistrust of Manual J for sizing HVAC. Could you write a performance program using your building specifications and yearly weather data, bypassing Manual J? Is Manual J able to digest the nuances that come with heat pump performance, balance points, and building thermal integrity?
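As a rough illustration of building the load line from building specifications instead of Manual J, the sketch below works in two steps. First, it sums conduction (area divided by effective R-value) and a sensible infiltration term (1.08 BTU/h per CFM per degree F, the usual factor for standard air) into a UA value in BTU/h per degree F. Second, it estimates a balance point as the setpoint minus internal gains divided by UA. Every number in it is a hypothetical placeholder: the areas, R-values, airflow, and the 2,500 BTU/h of internal gains. The model also ignores solar gains, ground losses, and thermal mass.

```python
# Hypothetical envelope: (component, area in ft^2, effective R-value in h*ft^2*F/BTU).
ENVELOPE = [
    ("walls", 1600.0, 18.0),
    ("ceiling", 1000.0, 45.0),
    ("windows", 250.0, 3.0),
    ("doors", 40.0, 5.0),
]
INFILTRATION_CFM = 60.0  # hypothetical average natural infiltration
INTERNAL_GAINS = 2500.0  # BTU/h, hypothetical people + appliances + lighting
SETPOINT_F = 70.0

def ua_btuh_per_f(envelope, infiltration_cfm):
    """Whole-house heat loss coefficient in BTU/h per degree F."""
    conduction = sum(area / r_value for _, area, r_value in envelope)
    infiltration = 1.08 * infiltration_cfm  # sensible heat of standard air
    return conduction + infiltration

def balance_point_f(setpoint_f, internal_gains_btuh, ua):
    """Outdoor temperature at which internal gains alone offset the losses."""
    return setpoint_f - internal_gains_btuh / ua

ua = ua_btuh_per_f(ENVELOPE, INFILTRATION_CFM)
bp = balance_point_f(SETPOINT_F, INTERNAL_GAINS, ua)
print(f"UA = {ua:.0f} BTU/h per F, balance point = {bp:.1f}F")
```

With these placeholder inputs, UA comes out near 267 BTU/h per degree F and the balance point near 60.6F. Real envelope data would give a slope and base temperature that could be cross-checked against the Manual J design load.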

Hi Doug,

I'll share anything useful that I come up with!

I'm not exactly trying to replace, or distrust, Manual J; it's just that I don't think existing measures adequately represent performance under different conditions. As a quick example, imagine that my Manual J calculations are "perfect" (i.e., the calculated and actual heat demand under design-day conditions are the same). Now say I'm trying to choose between two heat pumps with identical (and accurately reported) nameplate capacity and HSPF. HSPF is calculated under zone 4 average conditions, but I live in zone 5, and it's entirely possible (even likely) that these two "identical" units would perform very differently in my actual climate. What I'm trying to do is take the performance data available to me (from the NEEP ASHP directory: just a few points, but better than nothing!) and apply it to actual climate conditions in my region. The goal is something like a regionally adjusted HSPF/COP.
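A regionally adjusted seasonal COP along those lines could be sketched as follows. The sketch makes three assumptions:

1. Load follows the balance-point line from the example at the top of the thread (20,000 BTU/h at 10F, zero at 60F).
2. COP and maximum capacity are interpolated linearly between listed points.
3. Electric resistance backup (COP 1) covers any hour where the load exceeds capacity.

The two units, their datapoints, and the hourly temperature list are all hypothetical placeholders; in practice, the temperatures would come from the hourly NOAA data. The sketch ignores cycling losses at low load and anything else not captured by the listed points. The "HSPF-like" figure is simply seasonal COP times 3.412 BTU per Wh, not a rated HSPF.

```python
from bisect import bisect_left

WH_TO_BTU = 3.412  # 1 Wh of electricity = 3.412 BTU

def interp(points, x):
    """Piecewise-linear interpolation over (x, y) pairs sorted by x; clamps at the ends."""
    xs = [p[0] for p in points]
    if x <= xs[0]:
        return points[0][1]
    if x >= xs[-1]:
        return points[-1][1]
    i = bisect_left(xs, x)
    (x0, y0), (x1, y1) = points[i - 1], points[i]
    return y0 + (y1 - y0) * (x - x0) / (x1 - x0)

def seasonal_performance(hourly_temps_f, cop_points, capacity_points,
                         design_load=20_000.0, design_temp=10.0, base_temp=60.0):
    """Return (seasonal COP, HSPF-like BTU/Wh, backup kWh) over one-hour steps."""
    heat_btu = hp_wh = backup_wh = 0.0
    for t in hourly_temps_f:
        # Balance-point load line; one hour at this load delivers `load` BTU.
        load = design_load * max(0.0, base_temp - t) / (base_temp - design_temp)
        if load == 0.0:
            continue
        hp_heat = min(load, interp(capacity_points, t))
        hp_wh += hp_heat / WH_TO_BTU / interp(cop_points, t)
        backup_wh += (load - hp_heat) / WH_TO_BTU  # resistance heat, COP = 1
        heat_btu += load
    if heat_btu == 0.0:
        raise ValueError("no heating hours in the temperature series")
    total_wh = hp_wh + backup_wh
    return heat_btu / WH_TO_BTU / total_wh, heat_btu / total_wh, backup_wh / 1000.0

# Hypothetical units with the same capacity curve but different COP curves.
CAPACITY = [(5.0, 16_000.0), (17.0, 20_000.0), (47.0, 24_000.0)]  # BTU/h
UNIT_A_COP = [(5.0, 1.8), (17.0, 2.3), (47.0, 3.8)]
UNIT_B_COP = [(5.0, 2.2), (17.0, 2.7), (47.0, 3.5)]

# Hypothetical season: hours spent at a few representative temperatures.
TEMPS = [5.0] * 200 + [20.0] * 800 + [35.0] * 1500 + [45.0] * 1200 + [55.0] * 800

for name, cop_points in (("A", UNIT_A_COP), ("B", UNIT_B_COP)):
    cop, hspf_like, backup_kwh = seasonal_performance(TEMPS, cop_points, CAPACITY)
    print(f"unit {name}: seasonal COP {cop:.2f}, {hspf_like:.1f} BTU/Wh, "
          f"backup {backup_kwh:.0f} kWh")
```

With these made-up numbers, both units share the same capacity curve, and unit A has the better COP at 47F. Yet unit B, with the better cold-weather COP, comes out ahead (a seasonal COP of about 2.8 vs. 2.7). That is the kind of reversal a single rated number can hide.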