Energy impact with high temp water heater and thermostatic mixing valve?

How much more or less energy will my water heater use if I increase the internal setpoint and install a thermostatic mixing valve?

Okay, let’s say I have a bog-standard electric resistive water heater set to 120F, low enough to guard against accidental scalding but still high enough to at least keep Legionella from breeding.

I then install a thermostatic mixing valve above the heater and bump the internal setpoint to 160F. The valve is set to mix in enough 55F cold water to produce a 120F flow into the house. This has a couple of notable benefits: considerably more usable hot water without having to get a bigger tank, and the complete elimination of any Legionella.

Using a calculator from the OmniCalculator site, I enter 400 lbs for roughly 50 gallons of water, heated from 55F to 120F at 100% efficiency. That takes 7.6 kWh. Switching the end temperature to 160F takes about 60% more energy: 12.3 kWh. Oof.

But remember that I’m not using as much hot water anymore! If my internal setpoint is 160F, my cold water is 55F, and my valve setpoint is 120F, then 160p + 55(1-p) = 120 gives me roughly 60% hot water mixed with 40% cold to get my result. Put another way, I only need about 60% of a gallon of heated water to get a gallon of the desired hot water (since the rest of the heat in that gallon comes from the natural heat in the “cold” water).

I think I can then redo my calculation with 400 lbs * 0.6 = 240 lbs of water to heat. Re-running the numbers, I now see only 7.3 kWh. That’s less energy than the baseline!

How realistic is this? Is my logic or math off anywhere? Has anybody done any real world testing on this and can report actual numbers?

(This question was reposted without the link to the calculator, since this forum doesn’t like links)

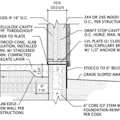

GBA Detail Library

A collection of one thousand construction details organized by climate and house part

Replies

The energy required to supply water at the desired temp will be the same. The standby losses of the tank will increase, however, as the tank temp increases.

Paul is correct. The energy needed to heat a given volume of water rises roughly linearly with the temperature rise, up until the point of phase change (water changes to steam), where there is a big discontinuity. That means heating half as much water through twice the temperature rise takes roughly the same amount of energy as heating the full amount of water through the original rise.

Running the tank hotter uses more energy to make the water hotter, but since you now draw less volume from the tank due to the mixing valve, you end up using somewhere pretty close to the same amount of energy to make the amount of hot water that you actually use. The downside is that keeping the tank hotter ALL THE TIME means you'll have more standby losses ALL THE TIME, since the rate at which heat escapes through the tank's insulation depends on how much hotter the water in the tank is than the air around it.
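To put numbers on that, here is a minimal Python sketch using the figures from the question (400 lbs of delivered water, 55F cold, 120F delivered, 160F tank). It assumes 1 BTU per pound per degree F, 100% efficient heating, and no standby losses; the function names are made up for illustration.

```python
# Sketch only: sensible-heat comparison using the numbers from the question.
# Assumes 1 BTU per lb per degF, 100% efficient heating, no standby losses.
BTU_PER_KWH = 3412.14


def heating_energy_kwh(pounds, t_in_f, t_out_f):
    """Energy to heat `pounds` of water from t_in_f to t_out_f."""
    return pounds * (t_out_f - t_in_f) / BTU_PER_KWH


def hot_fraction(t_tank_f, t_cold_f, t_mix_f):
    """Solve t_tank*p + t_cold*(1 - p) = t_mix for the hot share p."""
    return (t_mix_f - t_cold_f) / (t_tank_f - t_cold_f)


delivered_lb = 400  # roughly 50 gallons delivered at 120F

baseline = heating_energy_kwh(delivered_lb, 55, 120)
p = hot_fraction(160, 55, 120)
with_valve = heating_energy_kwh(delivered_lb * p, 55, 160)
rounded = heating_energy_kwh(delivered_lb * 0.6, 55, 160)

print(f"hot fraction p: {p:.3f}")
print(f"baseline, tank at 120F: {baseline:.2f} kWh")
print(f"tank at 160F, exact p: {with_valve:.2f} kWh")
print(f"tank at 160F, p rounded to 0.6: {rounded:.2f} kWh")
```

With the unrounded fraction (65/105, about 0.62) the two cases come out identical at about 7.6 kWh. The apparent saving in the question comes from rounding the fraction down to 0.6.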

The short answer is that cranking up the temperature on the tank, then using a mixing valve to deliver more reasonable water temperatures to your home will do two things for you:

1- You'll have a higher effective volume of "hot" water to use, which means you can do nice things like take longer showers before running out of hot water. This is the reason people usually opt for higher temperatures on the tank and thermostatic mixing valves: it's a way to get more useful hot water capacity without getting a bigger water heater.

2- You'll use MORE total energy over time, because of the higher losses of the water heater running at the higher temperature.
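As a rough illustration of the first point, the sketch below estimates how much 120F water a full tank could deliver through the mixing valve. It is an idealized upper bound: it assumes the whole tank is at the setpoint and ignores cold water entering and diluting the tank during the draw. The function name and the 50 gallon tank size are just illustrative, taken from the numbers in the question.

```python
# Sketch only: idealized "effective volume" of a tank behind a mixing valve.
# Assumes the whole tank is at setpoint and is not diluted during the draw.
def delivered_gallons(tank_gal, t_tank_f, t_cold_f, t_mix_f):
    """Gallons of mixed water one full tank can supply at t_mix_f."""
    hot_share = (t_mix_f - t_cold_f) / (t_tank_f - t_cold_f)
    return tank_gal / hot_share


at_120 = delivered_gallons(50, 120, 55, 120)  # no mixing needed
at_160 = delivered_gallons(50, 160, 55, 120)

print(f"tank at 120F: {at_120:.0f} gallons of 120F water")
print(f"tank at 160F: {at_160:.0f} gallons of 120F water")
```

Under those assumptions a 50 gallon tank at 160F behaves like roughly an 80 gallon tank at 120F. A real tank will deliver somewhat less.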

If you are only trying to save energy, I wouldn't go forward with your plan.

Bill

Thanks Paul and Bill. Yeah, I wasn't expecting to save energy on this and was just surprised to see the math suggest that that might be the case. I am looking into this for the two reasons I mention in the question: more effective volume from a smaller tank and the elimination of Legionella. The bacteria case matters to me specifically because this will be used by an elderly couple and I'm concerned about the immune response from "non-breeding" Legionella vs practically to completely none. I just didn't want to use notably more energy going the mixer route.

Standby losses did come to mind, but I've had a very difficult time finding any numbers that I could effectively use. Some estimates mention 1,200 kWh annually on average, but that's 100 kWh a month and my current HPWH only requires 56 kWh/mo for its operation, so I have no idea what parameters that "average" is based on. I can also say that my HPWH pretty much never turns on except right after a hot water draw... but no, I don't know how much of the resulting energy is used to heat up the incoming water vs "re-heating" the slightly cooled tank water. This new tank isn't an HPWH, though, so it's not going to have as much insulation... but maybe a $50 insulation blanket would come close?

Would the standby losses be roughly linear, too? If so, maybe it would be helpful to consider that the "baseline" unavoidable losses 55F to 120F will be notably more than the new losses from 120F to 160F? Hmmm...

Standby losses are also linear: it’s water temp minus ambient air temp that gives you the delta T. Ballpark, I think they’re pretty small. A 50 gallon heater comes with about R-16 across 30-ish sq ft of insulation, so about 240 kWh a year for a resistance heater in a 70F space. For a heat pump, probably around 60 kWh a year.
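That ballpark can be reproduced with a simple steady-state estimate: heat loss in BTU per hour is area times delta T divided by R-value. The sketch below assumes a 120F tank for the 240 kWh figure and a heat pump COP of 4 for the 60 kWh figure. Neither is stated outright above, they are just the values that match those numbers. It also ignores losses through fittings and piping.

```python
# Sketch only: steady-state standby loss through the tank insulation.
# Assumes uniform R-value over the whole surface; ignores fittings and piping.
BTU_PER_KWH = 3412.14
HOURS_PER_YEAR = 8760


def standby_kwh_per_year(area_sqft, r_value, t_tank_f, t_ambient_f, cop=1.0):
    """Annual electricity needed to replace heat lost through the jacket."""
    btu_per_hour = area_sqft * (t_tank_f - t_ambient_f) / r_value
    return btu_per_hour * HOURS_PER_YEAR / BTU_PER_KWH / cop


at_120 = standby_kwh_per_year(30, 16, 120, 70)
at_160 = standby_kwh_per_year(30, 16, 160, 70)
heat_pump_120 = standby_kwh_per_year(30, 16, 120, 70, cop=4.0)  # assumed COP

print(f"resistance, 120F tank: {at_120:.0f} kWh/yr")
print(f"resistance, 160F tank: {at_160:.0f} kWh/yr")
print(f"extra from raising the setpoint: {at_160 - at_120:.0f} kWh/yr")
print(f"heat pump (COP 4), 120F tank: {heat_pump_120:.0f} kWh/yr")
```

Because the loss scales with delta T, going from 120F to 160F in a 70F room raises it by a factor of 90/50 = 1.8, or a bit under 200 kWh a year extra for a resistance tank under these assumptions.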