Using renewables on-site vs. grid-tie

I have a question that I am hoping may lead to some debate. This is a theoretical question and not tied to a specific project that I am working on.

I was talking with a senior energy expert at the Department of Energy about reaping the benefits of onsite renewables, e.g. solar panels on your roof, wind turbine, solar thermal, etc.

With electricity in particular, we were discussing how difficult it is to account for the environmental benefits when that electricity is fed into the grid. The cost of transmitting electricity through the grid is very high: about 2 out of 3 watts are lost in the process. In addition, it’s hard to know if the 2 kW that you fed into the grid at 12 p.m. really resulted in the utility being able to reduce its coal-fired output by 2 kW. In fact, there are a lot of reasons to conclude that nothing of the kind happened. And that’s to say nothing about what happened at 2 p.m. when a thunderstorm rolled in and you needed that coal-fired juice to run your A/C and lights, while your solar panels twiddled their thumbs.

The point of our conversation was that the best use of onsite renewables, from a thermodynamic and environmental point of view, is onsite.

My question is: should we be more real with homeowners and building owners about the hard-to-see benefits of grid-tied renewables? Where does this lead? Toward more off-the-grid homes? Homes with some systems off the grid and others on the grid? Homes that use PV to make ice for off-peak cooling? Does this tell us to move away from PV to solar thermal?

Or, do you disagree with the premise?

Thanks in advance for your thoughts.

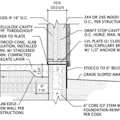

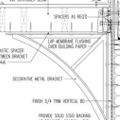

Replies

Great points and an interesting topic, Tristan.

While a few kW of PV isn't going to shut down fossil-fueled power plants that take hours to start and stop, a lot of distributed energy would have some benefit by reducing the load, and therefore the fuel consumption, of those plants. Hydro and natural gas plants are particularly good at following demand and should allow the most benefit from solar and wind systems. Also, while off-grid systems completely remove a building's load, so that enough such buildings might allow a plant to shut down, all the batteries required probably offset the environmental benefit.

I don't believe that 2/3 of electricity is lost in transmission. Electricity generation is only about 1/3 efficient, but I thought grid losses were 10-15%. Anyway, by reducing demand through efficiency or on-site generation, you effectively save more than what you generate, because you are also removing the losses and power plant inefficiencies associated with that power, so primary power savings might be 3 times the on-site savings.
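
The arithmetic behind that "3 times" estimate can be sketched roughly as follows. The function name is hypothetical, and the inputs (one-third generation efficiency, a 10% grid loss taken from the low end of the range guessed above) are illustrative assumptions, not measured values.

```python
def primary_energy_saved(onsite_kwh, generation_efficiency=1/3, grid_loss=0.10):
    """Fuel energy (kWh) a power plant no longer burns when a building
    avoids drawing `onsite_kwh` from the grid.

    Assumed, illustrative inputs: the plant converts about 1/3 of its fuel
    energy to electricity, and about 10% of that electricity is lost
    between the plant and the meter.
    """
    # Electricity the plant would have had to generate to deliver onsite_kwh.
    generated_kwh = onsite_kwh / (1.0 - grid_loss)
    # Fuel energy needed to generate that much electricity.
    return generated_kwh / generation_efficiency


saved = primary_energy_saved(1.0)
print(f"1 kWh avoided on site saves about {saved:.1f} kWh of primary energy")
```

With these assumed inputs, almost all of the multiplier comes from the generation step; the grid loss only nudges it a little above 3.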

Good correction. About 9% of the electricity is lost in distribution, while the rest of the losses occur at the point of generation. That does change the emphasis.

If you generate excess power at home and feed it to the grid, it is basically going to feed your neighbors (or whichever neighbors are on your pole transformer), and there should be little loss over that short distance.

In nearly all cases your 240 V line is spliced with theirs after the transformer, so for the most part it doesn't even see transformer losses.

Also remember that for every watt your neighbor uses that you produce, they are not drawing that watt all the way from the power plant, hence line savings.

I guess if all your neighbors are also generating excess, or you live remotely and are sending the excess far, there could be some loss. Net metering should discourage most of the abuse, as you can't gain anything by sending too much.

You could have people who want to experiment with solar go with a few panels and microinverters, enough to cover their general base load at noon. Then you don't have to worry about what happens to your excess or mess with net metering and such. I'm running a single panel that way on a small home. It's nice for an area that doesn't have all the perks of running solar, i.e. rebates or utility credits. My utility is a bit hostile; the cost of setting up and the extra fees almost negate the cost benefits.

I'm a distribution engineer at a large electric utility, so I can offer what I know about our system.

Damon Lane is correct in noting that grid losses are much smaller. Even the 9% number that Tristan submitted in comment #2 seems unreasonably high, and I suspect it is an averaged figure that includes transmission-to-distribution voltage transformation losses. With residential-sized onsite renewables, these losses will rarely be seen. Most of the losses on a distribution line itself come from the transformers serving customers, and these rarely account for a loss greater than 5% or so. Damon and Bob are also correct in noting that excess power produced by an onsite renewable source will be consumed by neighbors and other users connected to the same distribution circuit. The fact that power does not need to flow all the way from a generating plant is actually the main reason why renewables are so beneficial to a utility system: the losses in transmission, distribution, and transformation are eliminated, so the reduction in power that must be produced at a generating plant is greater than the demand offset by the renewable.

I do have a minor point to make about client education, though. In most parts of North America, utility customers are currently billed only for total electric consumption. This makes the creation of a "net-zero home" feasible - total production of a renewable can be greater than the consumption of a home over a period of time. However, as we should be well aware, most onsite renewables cannot meet a home's demand at all times, especially during the times of highest loading. Utilities must provide this marginal power to a grid-tied home, and as renewables become more widespread utilities will begin to bill for this benefit via real-time pricing, time-of-use rates, or something similar.

In other words, a net-zero home might lead to no electricity bills today, but it is possible (even probable) that the electricity bills will return as utilities learn how to more properly bill their customers for the benefits they provide.

Ben Wilson,

The smart grid will supposedly bring real-time pricing and time-of-use (TOU) rates. That's another benefit of solar PV that you're overlooking. Solar PV has the highest output during the most expensive TOU time frame.

According to the following article, an equitable TOU rate structure will reduce the size of the PV array on a net zero house by 28%: "During the sunniest times of the year, when a PV system is generating the most energy, the value of this energy in terms of retail price credits is much higher using a time-of-use electric rate schedule. This is a key reason why PV systems are financially attractive. This enables one to zero out the electric bill by installing a smaller system (about 28% smaller"

http://www.gosolarnow.com/NetMetering.html

So I have to disagree with your assertion that "a net-zero home might lead to no electricity bills today, but it is possible (even probable) that the electricity bills will return."

On the contrary, once the smart grid gets dialed in (not expected anytime soon) today's net zero energy home will create 28% more dollars than it uses. Most net metering schemes, I realize, pay wholesale prices or zero for the electricity generated above net zero.
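
As a rough check on how a "28% smaller" array translates into surplus dollars, here is a minimal sketch. It assumes that bill credits scale linearly with array size, which is a simplification, and the function name is hypothetical. Under that assumption, a full-size array earns somewhat more than 28% over its charges.

```python
def credit_ratio_at_full_size(size_reduction):
    """Ratio of annual bill credits to annual charges for a full-size
    (net-zero-energy) array, given that an array smaller by
    `size_reduction` already zeroes out the bill.

    Assumes credits scale linearly with array size (a simplification).
    """
    return 1.0 / (1.0 - size_reduction)


ratio = credit_ratio_at_full_size(0.28)
print(f"credits are about {ratio:.2f} times charges")
```

How much of that surplus a homeowner would actually be paid depends on how the utility credits generation above net zero.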

Kevin,

You make some good points, and it is possible that TOU rates in the right environment will make solar installations even more attractive in those areas. I should also clarify that a net-zero home whose designers make the thermal envelope a higher priority than the PV array is much less likely to experience higher costs due to TOU rates. However, it has been my experience that many net-zero homes have been built that still require (or at least use) A/C during the summer and electric heating during the winter.

The solar net-zero homes I have worked with are all in the Midwest, and they all have followed the same pattern of producing far more energy than they consume during Spring and Fall and less energy than they consume during Winter and Summer. The fact that PV produces more energy during the summer is more than offset by the usage of A/C in many of these homes. At the end of the year, the Spring and Fall surplus cancels out the Summer and Winter deficiency. With TOU rates, however, cancelling out may not be enough.
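
A small sketch of why cancelling out in kWh may not cancel out in dollars. Every number below (seasonal net energy and prices) is made up for illustration; the only assumption that matters is that the deficit seasons are billed at a higher average rate than the surplus seasons are credited.

```python
# Hypothetical seasonal net energy for a net-zero home, in kWh
# (positive = surplus exported, negative = deficit imported).
net_kwh = {"spring": 1500, "summer": -1200, "fall": 1500, "winter": -1800}

# Hypothetical average price applied to each season's net energy, in $/kWh.
# Deficit seasons are assumed to coincide with higher-priced periods.
price = {"spring": 0.08, "summer": 0.16, "fall": 0.08, "winter": 0.12}

annual_net_kwh = sum(net_kwh.values())
# Negative kWh is energy bought, so the bill is the negated priced sum.
annual_bill = -sum(net_kwh[season] * price[season] for season in net_kwh)

print(f"annual net energy: {annual_net_kwh} kWh")
print(f"annual bill: ${annual_bill:.2f}")
```

With these invented figures the home is net-zero in energy but still owes money at the end of the year. If the price pattern were reversed, the same home would come out ahead.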

Most of the builders/designers/architects who frequent this site seem interested in creating truly well-built and energy efficient homes. Unfortunately, many of the "green" homes that I have worked with have been just green enough to smack a big PV array onto in order to make it net-zero. My caution of returning electricity bills may apply only to these bare-minimum buildings, but I believe that it is something to be aware of for all builders.

Agreed. Here are two examples of zero energy homes, and it's pretty easy to spot which one is greener:

http://www1.eere.energy.gov/buildings/challenge/pdfs/Solar_Harvest.pdf

http://www.nrel.gov/buildings/pdfs/43188.pdf

TOU rates won't earn you more if the electric companies bill as they do now.

They often pay "market rates" for your excess while they still bill customer rates for what you use. Market rates can be half the customer rate, and they don't pay you back the distribution fee, since they are the ones doing the distributing.

The electric company is getting its fee through the fixed fees on the bill. In my area they keep raising the fixed fee while leaving the remainder alone. On top of that, they charge much more for using net metering, i.e. a higher account charge, and pass the high cost of the additional equipment on to the user.
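
To put rough numbers on that billing pattern, here is a minimal sketch. The function name, the rates, and the fixed fee are all hypothetical; the export rate is simply set to half the retail rate, as described above.

```python
def monthly_bill(imported_kwh, exported_kwh,
                 retail_rate=0.12, export_rate=0.06, fixed_fee=15.0):
    """Monthly bill in dollars when exports are credited at a lower rate
    than imports are charged. All rates and fees are hypothetical."""
    energy_charge = imported_kwh * retail_rate
    export_credit = exported_kwh * export_rate
    return fixed_fee + energy_charge - export_credit


bill = monthly_bill(imported_kwh=500, exported_kwh=500)
print(f"bill for a month with zero net energy: ${bill:.2f}")
```

Under these assumed rates, a home that exports exactly as much as it imports still pays both the fixed fee and the gap between the two rates.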

It would be foolish to think that major power companies would not make money on the power you are generating...